Integrates with VS Code through the Copilot Chat interface to provide access to knowledge graphs. Requires an active GitHub Copilot subscription to use MCP features.

Provides a Python-based MCP server for querying Proto-OKN knowledge graphs, with specialized support for FRINK-hosted knowledge graphs and SPARQL endpoints.

MCP Server Proto-OKN

A Model Context Protocol (MCP) server providing seamless access to SPARQL endpoints with specialized support for the NSF-funded Proto-OKN Project (Prototype Open Knowledge Network). This server enables intelligent querying of biomedical and scientific knowledge graphs hosted on the FRINK platform.

Features

🔗 FRINK Integration: Automatic detection and documentation linking for FRINK-hosted knowledge graphs

🧬 Proto-OKN Ecosystem: Optimized support for biomedical and scientific knowledge graphs including:

SPOKE - Scalable Precision Medicine Open Knowledge Engine

BioBricks ICE - Chemical safety and cheminformatics data

DREAM-KG - Addressing homelessness with explainable AI

SAWGraph - Safe Agricultural Products and Water monitoring

Additional Proto-OKN knowledge graphs - Expanding ecosystem of scientific data

⚙️ Flexible Configuration: Support for both FRINK and custom SPARQL endpoints

📚 Automatic Documentation: Registry links and metadata for supported knowledge graphs

Architecture

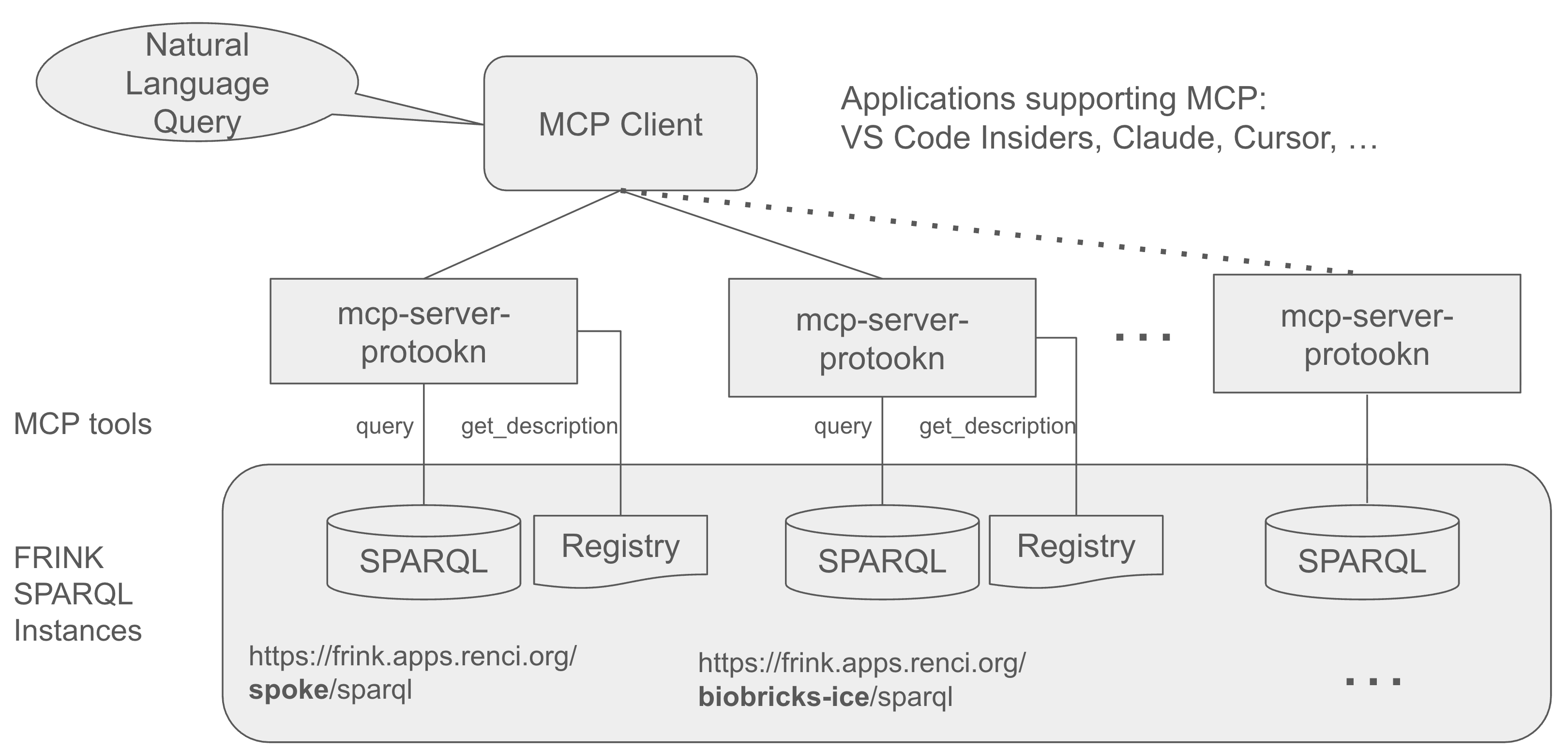

The MCP Server Proto-OKN acts as a bridge between AI assistants (like Claude) and SPARQL knowledge graphs, enabling natural language queries to be converted into structured SPARQL queries and executed against scientific databases.
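To make the last step of that bridge concrete, here is a minimal sketch of how a generated SPARQL query could be sent to an endpoint over HTTP using the standard SPARQL 1.1 Protocol. It is not the server's own code: the function names and the sample query are assumptions for illustration, and the endpoint URL is the example given in the Usage section.

```python
import json
import urllib.parse
import urllib.request

# Example FRINK endpoint taken from the Usage section of this document.
ENDPOINT = "https://frink.apps.renci.org/spoke/sparql"

# Illustrative query an assistant might generate (assumption, not from the project).
EXAMPLE_QUERY = "SELECT ?s ?p ?o WHERE { ?s ?p ?o } LIMIT 5"


def build_sparql_request(endpoint: str, query: str) -> urllib.request.Request:
    """Build an HTTP GET request that follows the SPARQL 1.1 Protocol."""
    url = endpoint + "?" + urllib.parse.urlencode({"query": query})
    # Ask for the standard SPARQL JSON results format.
    return urllib.request.Request(
        url, headers={"Accept": "application/sparql-results+json"}
    )


def run_sparql(endpoint: str, query: str, timeout: float = 30.0) -> dict:
    """Send the query and decode the JSON response (requires network access)."""
    request = build_sparql_request(endpoint, query)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


if __name__ == "__main__":
    # Only builds and prints the request URL; call run_sparql() to execute it.
    print(build_sparql_request(ENDPOINT, EXAMPLE_QUERY).full_url)
```

Keeping request construction separate from execution makes the query easy to inspect before anything is sent over the network.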

Prerequisites

Before installing the MCP Server Proto-OKN, ensure you have:

Operating System: macOS, Linux, or Windows

Client Application: One of the following:

Claude Desktop with Pro or Max subscription

VS Code Insiders with GitHub Copilot subscription

Installation

Initial Setup

Install uv Package Manager

The uv package manager is required for Python dependency management:

    # macOS/Linux
    curl -LsSf https://astral.sh/uv/install.sh | sh

    # Windows
    powershell -c "irm https://astral.sh/uv/install.ps1 | iex"

    # Alternative: via pip
    pip install uv

Note: Python installation is not required. uv will automatically install Python and all dependencies.

Verify Installation Path (macOS only)

    which uv

If uv is not installed in /usr/local/bin, create a symbolic link for Claude Desktop compatibility:

    sudo ln -s $(which uv) /usr/local/bin/uv

Clone and Setup Project

    git clone https://github.com/sbl-sdsc/mcp-proto-okn.git
    cd mcp-proto-okn
    uv sync

Create a

The tool runs in a self-contained environment managed by uv.

    uv tool install $HOME/path_to_git_repo/mcp-proto-okn
    uv tool list

Claude Desktop Setup

Recommended for most users

Download and Install Claude Desktop

Visit https://claude.ai/download and install Claude Desktop for your operating system.

Requirements: Claude Pro or Max subscription is required for MCP server functionality.

Configure MCP Server

macOS

    cp claude_desktop_config.json "$HOME/Library/Application Support/Claude/"

Windows

    copy claude_desktop_config.json %APPDATA%\Claude\claude_desktop_config.json

Refer to the Claude documentation for details.

Note: If you have existing MCP server configurations, merge the contents instead of overwriting.
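One way to merge rather than overwrite is sketched below. It assumes both files are JSON documents with a top-level `mcpServers` object, as Claude Desktop configurations are; the helper names are hypothetical and not part of this project.

```python
import json
from pathlib import Path


def merge_mcp_servers(existing: dict, new: dict, key: str = "mcpServers") -> dict:
    """Return a copy of `existing` with the server entries from `new` added.

    Entries in `new` win when both configs define a server with the same name.
    All other top-level settings in `existing` are kept unchanged.
    """
    merged = dict(existing)
    merged[key] = {**existing.get(key, {}), **new.get(key, {})}
    return merged


def merge_config_files(existing_path: Path, new_path: Path) -> None:
    """Merge the servers defined in `new_path` into `existing_path` in place."""
    existing = json.loads(existing_path.read_text(encoding="utf-8"))
    new = json.loads(new_path.read_text(encoding="utf-8"))
    merged = merge_mcp_servers(existing, new)
    existing_path.write_text(json.dumps(merged, indent=2), encoding="utf-8")
```

Back up the existing configuration file before running a merge like this, since the target file is rewritten.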

Restart Claude Desktop

After saving the configuration file, quit Claude Desktop and restart it. The application needs to restart to load the new configuration and start the MCP server.

Verify Installation

Launch Claude Desktop

Click "Connect your tools to Claude" at the bottom of the interface

Click "Manage connectors"

Verify that mcp-proto-okn tools appear in the connector list

VS Code Setup

For advanced users and developers

Install VS Code Insiders

Download and install VS Code Insiders from https://code.visualstudio.com/insiders/

Note: VS Code Insiders is required as it includes the latest MCP (Model Context Protocol) features.

Install GitHub Copilot Extension

Open VS Code Insiders

Sign in with your GitHub account

Install the GitHub Copilot extension

Requirements: GitHub Copilot subscription is required for MCP integration.

Configure Workspace

Open VS Code Insiders

File → Open Folder → Select mcp-proto-okn directory

Open a new chat window

Select Agent mode

Choose Claude Sonnet 4 model for optimal performance

The MCP servers will automatically connect and provide knowledge graph access

Configuration

The server comes pre-configured with 10 Proto-OKN SPARQL endpoints. You can customize the configuration by editing the appropriate files:

Claude Desktop: claude_desktop_config.json

VS Code: .vscode/mcp.json

Adding Custom Endpoints

To add additional Proto-OKN endpoints or third-party SPARQL endpoints, modify the configuration file. The snippet below shows how to specify FRINK and third-party endpoints.

Note: For VS Code configuration (.vscode/mcp.json), replace mcpServers with servers.
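As a rough illustration of the configuration shape, the sketch below generates entries for one FRINK endpoint and one third-party endpoint, and switches the top-level key between `mcpServers` and `servers`. The launcher command (`uvx`), the executable name, the server names, and the `example.org` URL are all assumptions; consult the `claude_desktop_config.json` shipped in the repository for the actual values.

```python
import json
from typing import Optional

# ASSUMPTION: launcher command and executable name are illustrative only.
COMMAND = "uvx"
EXECUTABLE = "mcp-proto-okn"


def server_entry(endpoint: str, description: Optional[str] = None) -> dict:
    """Build one server entry using the documented --endpoint/--description flags."""
    args = [EXECUTABLE, "--endpoint", endpoint]
    if description is not None:
        # FRINK endpoints get an auto-generated description, so this is optional.
        args += ["--description", description]
    return {"command": COMMAND, "args": args}


def build_config(entries: dict, client: str = "claude") -> dict:
    """Wrap entries under 'mcpServers' (Claude Desktop) or 'servers' (VS Code)."""
    top_key = "servers" if client == "vscode" else "mcpServers"
    return {top_key: entries}


# Hypothetical server names; the third-party URL is a placeholder.
entries = {
    "spoke": server_entry("https://frink.apps.renci.org/spoke/sparql"),
    "custom-endpoint": server_entry(
        "https://example.org/sparql",
        description="Hypothetical third-party SPARQL endpoint",
    ),
}

if __name__ == "__main__":
    print(json.dumps(build_config(entries, client="claude"), indent=2))
```

Passing `client="vscode"` produces the same entries under the `servers` key for `.vscode/mcp.json`.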

Quick Start

Once configured, you can immediately start querying knowledge graphs through natural language prompts in Claude Desktop or the VS Code chat interface.

Example Queries

Knowledge Graph Overview

Provide a concise overview of the SPOKE knowledge graph, including its main purpose, data sources, and key features.

Multi-Entity Analysis

Antibiotic contamination can contribute to antimicrobial resistance. Find locations with antibiotic contamination.

Cross-Knowledge Graph Comparison

What type of data is available for perfluorooctanoic acid in SPOKE, BioBricks, and SAWGraph?

The AI assistant will automatically convert your natural language queries into appropriate SPARQL queries, execute them against the configured endpoints, and return structured, interpretable results.

Usage

Command Line Interface

The MCP server can be invoked directly with the following parameters:

Required Parameters:

--endpoint: SPARQL endpoint URL (e.g., https://frink.apps.renci.org/spoke/sparql)

Optional Parameters:

--description: Custom description for the SPARQL endpoint (auto-generated for FRINK endpoints)

Example Usage
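A minimal sketch of assembling an invocation from the two documented parameters follows. The executable name `mcp-proto-okn` is an assumption based on the tool name used during installation, and the description text is made up for illustration.

```python
import shlex
from typing import List, Optional


def build_command(
    endpoint: str,
    description: Optional[str] = None,
    executable: str = "mcp-proto-okn",  # ASSUMPTION: actual entry point may differ
) -> List[str]:
    """Assemble an argument list from the documented command-line parameters."""
    argv = [executable, "--endpoint", endpoint]
    if description is not None:
        argv += ["--description", description]
    return argv


argv = build_command(
    "https://frink.apps.renci.org/spoke/sparql",
    description="SPOKE knowledge graph",  # illustrative description
)

if __name__ == "__main__":
    # shlex.join quotes arguments so the line can be pasted into a POSIX shell.
    print(shlex.join(argv))
```

For FRINK endpoints the `--description` argument can be left out, since the description is generated automatically.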

API Reference

Available Tools

query

Executes SPARQL queries against the configured endpoint.

Parameters:

query_string (string, required): A valid SPARQL query

Returns:

JSON object containing query results
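If the returned object follows the W3C SPARQL 1.1 Query Results JSON Format (an assumption; the exact shape returned by the tool is not specified here), it can be flattened into simple rows as in this sketch. The sample data and the `bindings_to_rows` helper are hypothetical.

```python
from typing import Dict, List, Optional


def bindings_to_rows(result: dict) -> List[Dict[str, Optional[str]]]:
    """Flatten SPARQL JSON results into one plain dict per solution.

    Variables that are unbound in a solution are reported as None.
    """
    variables = result["head"]["vars"]
    rows = []
    for binding in result["results"]["bindings"]:
        rows.append(
            {
                var: (binding[var]["value"] if var in binding else None)
                for var in variables
            }
        )
    return rows


# Hypothetical result in the standard SPARQL JSON layout.
sample = {
    "head": {"vars": ["compound", "label"]},
    "results": {
        "bindings": [
            {
                "compound": {"type": "uri", "value": "https://example.org/compound/1"},
                "label": {"type": "literal", "value": "perfluorooctanoic acid"},
            },
            # Second solution has no label bound.
            {"compound": {"type": "uri", "value": "https://example.org/compound/2"}},
        ]
    },
}

if __name__ == "__main__":
    for row in bindings_to_rows(sample):
        print(row)
```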

get_description

Retrieves endpoint metadata and documentation.

Parameters:

None

Returns:

String containing endpoint description, PI information, funding details, and related documentation links

License

This project is licensed under the BSD 3-Clause License. See the LICENSE file for details.

Citation

If you use MCP Server Proto-OKN in your research, please cite the following works:

Related Publications

Nelson, C.A., Rose, P.W., Soman, K., Sanders, L.M., Gebre, S.G., Costes, S.V., Baranzini, S.E. (2025). "NASA GeneLab-Knowledge Graph Fabric Enables Deep Biomedical Analysis of Multi-Omics Datasets." NASA Technical Reports, 20250000723.

Sanders, L., Costes, S., Soman, K., Rose, P., Nelson, C., Sawyer, A., Gebre, S., Baranzini, S. (2024). "Biomedical Knowledge Graph Capability for Space Biology Knowledge Gain." 45th COSPAR Scientific Assembly, July 13-21, 2024.

Acknowledgments

Funding

This work is supported by:

National Science Foundation Award #2333819: "Proto-OKN Theme 1: Connecting Biomedical information on Earth and in Space via the SPOKE knowledge graph"

Related Projects

Proto-OKN Project - Prototype Open Knowledge Network initiative

FRINK Platform - Knowledge graph hosting infrastructure

Knowledge Graph Registry - Catalog of available knowledge graphs

Model Context Protocol - AI assistant integration standard

Original MCP Server SPARQL - Base implementation reference

For questions, issues, or contributions, please visit our
